The Difference between Traditional and Next Gen Vulnerability Management

Vulnerability management comprises the tests and procedures used to identify new or recurring threats so that they can be dealt with. It is most common in computer systems and other chip-operated machines that need constant monitoring.

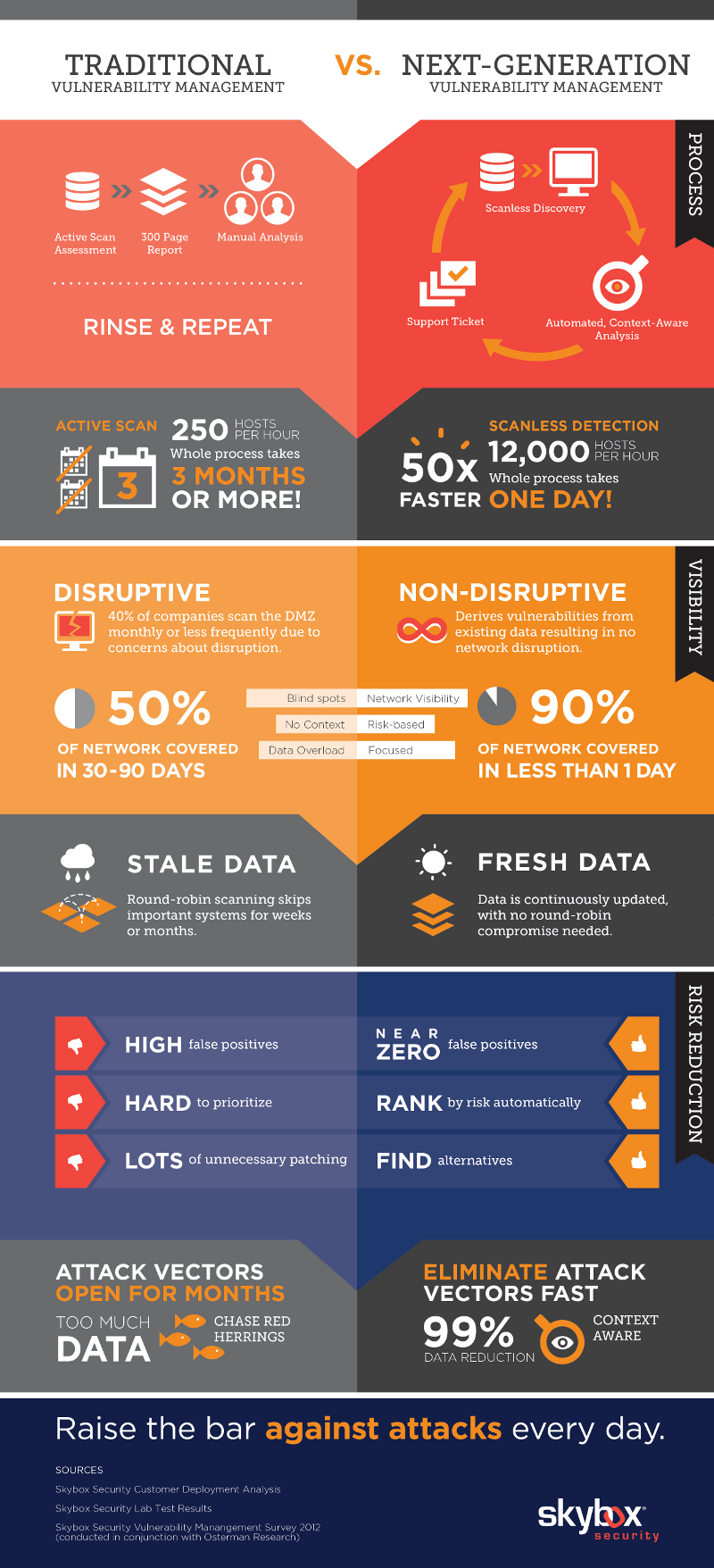

There are two types of vulnerability management today, traditional and next-gen, though one is quickly overtaking the other. Although the two work in a broadly similar manner, they differ in how they function and in how efficient they are. Here are some of the most notable differences.

Traditional Vulnerability Management

Traditional vulnerability management requires active scan assessments, which generate reports that technicians must analyze manually to identify a fault. This is a tiring process, and it can take days before a threat is identified and then dealt with. With next-gen vulnerability management, faults and compromised devices or ports are identified automatically, so manual analysis is not needed. With scanless discovery of faults, systems can run efficiently for longer and without disruption, as some of the repairs are automated too.

Traditional vulnerability management is disruptive compared with the non-disruptive nature of next-gen systems. Most organizations that use the traditional method scan their systems only once a year, which leaves room for breaches to go unnoticed between scans. The scanning time, together with the time taken to analyze and rectify a problem, can also disrupt a company's operations for more than 3 days. Next-gen vulnerability management, however, extracts data from vulnerable ports or systems and alerts administrators so that they can work on them. More ground is covered in a day than with traditional means, so there are very few service disruptions.
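To put the once-a-year scan in perspective, here is a minimal back-of-the-envelope sketch. It assumes, purely for illustration, that a flaw appears at a uniformly random moment between two scans, so the average wait until the next scan is half the scan interval. The function name and the daily interval are hypothetical and do not come from any particular product.

```python
def mean_detection_delay_days(scan_interval_days: float) -> float:
    """Average wait until the next scan.

    Assumption: the flaw appears at a uniformly random moment within
    the scan interval, so the expected wait is half the interval.
    """
    if scan_interval_days <= 0:
        raise ValueError("scan interval must be positive")
    return scan_interval_days / 2


# Yearly scan (from the text) versus a hypothetical daily assessment.
annual_delay = mean_detection_delay_days(365)
daily_delay = mean_detection_delay_days(1)
print(f"yearly scan: {annual_delay} days, daily assessment: {daily_delay} days")
```

Under this simplified model, a yearly scan leaves a flaw undetected for about half a year on average, while a daily assessment cuts that to about half a day.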

Next Gen Vulnerability Management

Next-gen vulnerability management works with fresh data generated at scheduled times, with systems detecting defects automatically. The traditional approach, however, may rely on stale data that has sat in hard copies for a long time, so vital information can be missed. Its analysis is also less efficient and less accurate, producing a high rate of false positives.
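The fresh-versus-stale distinction can be sketched in a few lines. This is only an illustration under stated assumptions: the record layout (`host`, `collected_at`), the one-day freshness threshold, and the function name are all hypothetical, not taken from any specific tool.

```python
from datetime import datetime, timedelta, timezone


def split_by_freshness(findings, now, max_age=timedelta(days=1)):
    """Separate findings into (fresh, stale) by the age of their data.

    Assumption: each finding is a dict with a timezone-aware
    'collected_at' datetime; max_age is an arbitrary example threshold.
    """
    fresh, stale = [], []
    for finding in findings:
        age = now - finding["collected_at"]
        (fresh if age <= max_age else stale).append(finding)
    return fresh, stale


# Hypothetical sample data: one recent record, one months-old record.
now = datetime(2024, 1, 10, tzinfo=timezone.utc)
findings = [
    {"host": "web-01", "collected_at": now - timedelta(hours=3)},
    {"host": "db-02", "collected_at": now - timedelta(days=200)},
]
fresh, stale = split_by_freshness(findings, now)
print("fresh:", [f["host"] for f in fresh])
print("stale:", [f["host"] for f in stale])
```

A scheduled assessment would keep most records in the fresh list, whereas reports left sitting for months would land in the stale list and should be re-checked before anyone acts on them.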

The time taken to identify and eliminate vectors differs greatly between the two systems. With traditional vulnerability management, it may take months before a single vector is detected and eliminated. For a data company running such a system, most of its data may be compromised by the time the company notices, and that data could be used against it. Next-gen management systems, however, offer 99% vector detection within the shortest time possible. Everyone using the system, technical or not, is therefore well covered.

Privacy and data safety are key concerns for corporations and other big companies. A strong system is required to handle these security details and features and to assure workers of safety and reliability, and only next-generation vulnerability management systems can take care of this.
